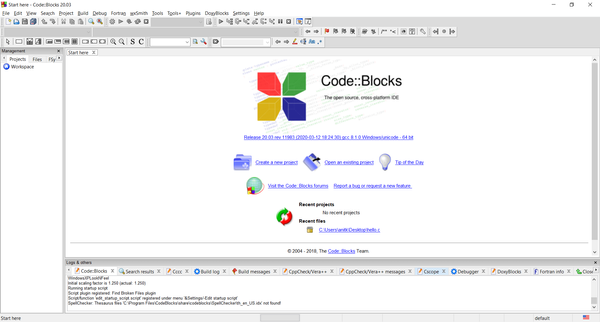

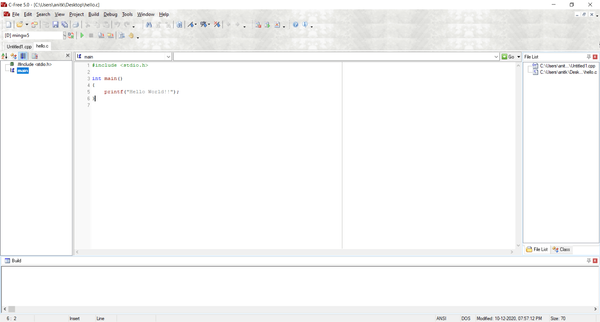

Suppose a1, b1, c1, and d1 point to heap memory and code has the following loop.

const int n=100000;

for(int j=0;j<n;j++){

a1[j] += b1[j];

c1[j] += d1[j];

}

This loop is executed 10,000 times via another outer for loop. To speed it up, I changed the code to:

for(int j=0;j<n;j++){

a1[j] += b1[j];

}

for(int j=0;j<n;j++){

c1[j] += d1[j];

}

Compiled on MicroSoft Visual C++ 10.0 , the first example takes 5.5 seconds and the double-loop example takes only 1.9 seconds.

Why?

Imagine you are working on a machine where n was just the right value for it only to be possible to hold two of your arrays in memory at one time, but the total memory available, via disk caching, was still sufficient to hold all four.

Assuming a simple LIFO caching policy, this code:

for(int j=0;j<n;j++){

a1[j] += b1[j];

}

for(int j=0;j<n;j++){

c1[j] += d1[j];

}

would first cause a1 and b1 to be loaded into RAM and then be worked on entirely in RAM. When the second loop starts, c1 and d1 would then be loaded from disk into RAM and operated on.

the other loop

for(int j=0;j<n;j++){

a1[j] += b1[j];

c1[j] += d1[j];

}

will page out two arrays and page in the other two every time around the loop. This would obviously be much slower.

You are probably not seeing disk caching in your tests but you are probably seeing the side effects of some other form of caching.

FLOP/s for different values of n on different processor